Let’s not beat around the bush, shall we? If there’s one programming language you simply must embrace as an SEO, it’s JavaScript.

After all, Google can successfully crawl JavaScript (and keeps getting better at it). In fact, the search engine was able to index dynamic content as early as 2008, albeit in a limited fashion.

But optimizing dynamic content requires having at least a basic understanding of the technology behind it.

In this post, we'll cover why embracing JavaScript will give you a distinct advantage in boosting online visibility.

Note: Throughout the post, I will be using both JavaScript and its abbreviation, JS, to denote the programming language.

Today, the majority of websites use JavaScript to add actions and interactive elements and to display dynamic content.

Multiple libraries and frameworks exist to help developers bring dynamics to the web. Popular examples include jQuery, React, and MooTools, along with the frameworks used to build Single Page Apps. Much of the data these scripts load travels in the JSON format.

Google, JavaScript, and SEO

But in spite of JavaScript’s popularity with developers, there’s an ongoing debate among SEOs as to whether Google can actually crawl and index JavaScript content properly.

We know that around ten years ago, the search engine started crawling and indexing content rendered with JS, although its abilities were quite limited at the time.

And although Google has made tremendous progress in the area (deprecating the old AJAX crawling scheme is one example), many SEOs still aren’t sure about the search engine’s ability to read JavaScript.

Ensure Bots Read Your Site Like Your Users

One way to ensure that is to use dedicated tools that crawl the JS on your pages and establish whether any issues prevent bots from rendering dynamic content.

Our site audit technology, Clarity Audits, for example, can handle crawling JS on individual pages, allowing you to identify problems that could block search engines from accessing all of your content.
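As a rough first check before reaching for a full crawler, you can test whether your key phrases already exist in the raw HTML the server returns, before any JS runs. The sketch below assumes you have fetched that HTML yourself. `findMissingInRawHtml` and the sample markup are made-up names for illustration and are not part of Clarity Audits or any other tool.

```javascript
// Hypothetical helper: reports which expected phrases are absent from the
// raw (pre-JavaScript) HTML. Anything it returns is probably injected by JS,
// so it is only visible to bots that actually render the page.
function findMissingInRawHtml(rawHtml, expectedPhrases) {
  // Drop script bodies and tags, then collapse whitespace,
  // so we compare against visible text only.
  const text = rawHtml
    .replace(/<script[\s\S]*?<\/script>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .toLowerCase();
  return expectedPhrases.filter(
    (phrase) => !text.includes(phrase.toLowerCase())
  );
}

// Sample markup (made up): the H1 is static, the price is added by a script.
const sampleHtml = `
  <html><body>
    <h1>Blue Running Shoes</h1>
    <div id="price"></div>
    <script>document.getElementById("price").textContent = "Only $59";</script>
  </body></html>`;

const missing = findMissingInRawHtml(sampleHtml, [
  "Blue Running Shoes",
  "Only $59",
]);
console.log(missing);
```

A phrase that shows up in this list is not necessarily a problem, but it is a good candidate to verify with a crawler that renders JS.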

JavaScript also affects some ranking factors, page speed being a critical one.

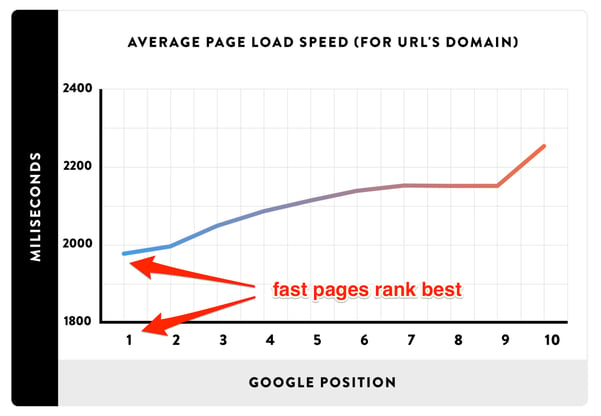

Fact: Page speed affects your page’s rankings.

Just take a look at this graph plotting page load time vs. page position in the SERPs to see how much of an impact it can have on online visibility.

And JavaScript files can seriously affect how fast a page loads, particularly if you're loading them from external files.

For that reason, you should serve JS directly in the page source. That is, include the scripts in the main code that makes up the page rather than in external files that a browser needs to request and load before it can render the page in full.

Also, rendering files on-page means that Googlebot will have fewer assets to crawl, resulting in a better utilization of the available crawl budget.
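To get a quick feel for how many external script requests a page makes, you could count them in the page source. This is only a sketch: `summarizeScripts` is a hypothetical helper, the sample markup and URLs are made up, and a regular expression is a shortcut that will not cope with every real-world page.

```javascript
// Hypothetical helper: splits the <script> tags found in an HTML string
// into external files (those with a src attribute) and inline scripts.
function summarizeScripts(html) {
  const external = [];
  let inlineCount = 0;
  // Match each opening <script ...> tag and capture its attribute string.
  const scriptTag = /<script\b([^>]*)>/gi;
  for (const match of html.matchAll(scriptTag)) {
    const src = /\bsrc\s*=\s*["']([^"']+)["']/i.exec(match[1]);
    if (src) {
      external.push(src[1]); // each of these is an extra request
    } else {
      inlineCount += 1; // served directly in the page source
    }
  }
  return { external, inlineCount };
}

// Sample markup (made up for illustration).
const pageHtml = `
  <script src="/js/vendor.js"></script>
  <script src="https://cdn.example.com/widget.js" async></script>
  <script>window.dataLayer = [];</script>`;

const summary = summarizeScripts(pageHtml);
console.log(summary.external);
console.log(summary.inlineCount);
```

Every entry in `external` is a file a browser (or Googlebot) has to fetch separately, which is exactly the overhead discussed above.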

JavaScript and Page Speed

How do you know if JS files affect your page speed?

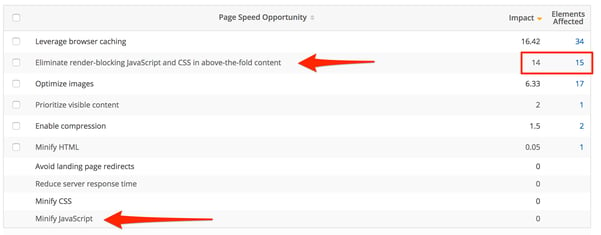

One way is to use tools like seoClarity’s Page Speed Analysis to discover your current page loading time and get the full list of files that could be slowing down your site.

Here’s what such a report looks like, with .js files marked.

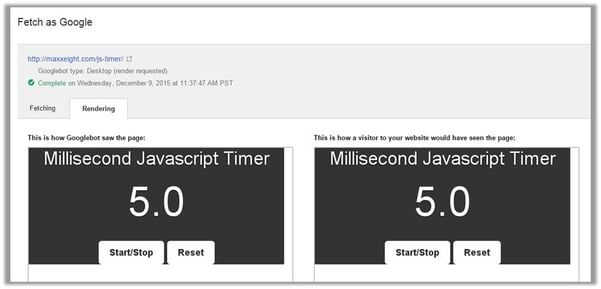

There’s another aspect of optimizing JavaScript speed: Google will only analyze the content loaded within the first 5 seconds of the bot's presence on a page. Any JS event that fires after that time will not be included in the JavaScript crawl screenshot.

Here’s a great timer test created by Max Prin:

In other words, optimizing page speed helps ensure that most of your content gets included in the snapshot Google takes to crawl and index JS.
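The idea behind a timer test can be sketched in a few lines. To be clear, this is not Max Prin's test, just a minimal illustration of the concept. `runTimerTest` and `simulateSnapshot` are hypothetical names, and the 5-second cutoff is simply the figure quoted above, not something the code verifies.

```javascript
// Sketch of a timer test: adds one line of content per second so you can
// later check how many lines made it into a rendered snapshot.
// `addLine` and `schedule` are injected so the same function works in a
// browser (DOM + setTimeout) or in a simulation (array + fake scheduler).
function runTimerTest(totalSeconds, addLine, schedule = setTimeout) {
  for (let second = 1; second <= totalSeconds; second += 1) {
    schedule(() => addLine(`Content added after ${second}s`), second * 1000);
  }
}

// Simulation: collect the scheduled callbacks, then "take a snapshot" by
// running only those whose delay falls within the cutoff.
function simulateSnapshot(totalSeconds, cutoffMs) {
  const pending = [];
  const lines = [];
  runTimerTest(
    totalSeconds,
    (line) => lines.push(line),
    (callback, delayMs) => pending.push({ callback, delayMs })
  );
  pending
    .filter((task) => task.delayMs <= cutoffMs)
    .forEach((task) => task.callback());
  return lines;
}

// Assumed cutoff of 5000 ms, taken from the figure mentioned in this post.
const visible = simulateSnapshot(10, 5000);
console.log(visible);
```

On a real test page you would pass an `addLine` callback that appends a paragraph to the document and leave `schedule` at its default, then compare the rendered snapshot with the full list of lines.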

Single Page Applications

If your organization uses Single Page Applications (SPAs), then you also need to optimize their JS.

Single Page Applications are web applications that load a single HTML page and update it dynamically, without reloading, using frameworks such as AngularJS, Ember, or Knockout.

Recommended Reading: Optimize AngularJS SEO for Crawling and Indexing

However, with so much SPA content loaded dynamically on a page, there’s not much for search engine bots to crawl, index, and cache.

One way to overcome this challenge is to render the most crucial on-page SEO elements (e.g., H1 tags, the page title, etc.) as static code. This way, they’ll remain visible to bots, regardless of the dynamically changing content. This is commonly referred to as Initial Static Rendering (see example below).

As Keith Horwood, a technical lead at Storefront, points out (note, the emphasis in bold is mine):

“Separate your concerns! How would you build your website if you just cared about branding and wanted people to download your app? Build that website. Keep your branding and archivable content separate from your application.”
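Here is a minimal sketch of what rendering the crucial elements as static code could look like on the server. It assumes a Node-style setup where you build the HTML string yourself. `renderStaticShell`, the `app` container ID, and the script path are all hypothetical, and real frameworks offer their own server-rendering tools.

```javascript
// Escapes text so it is safe to place inside HTML content and attributes.
function escapeHtml(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Hypothetical server-side helper: writes the title, meta description, and
// H1 straight into the HTML, and leaves an empty container for the SPA to
// fill in later. Bots see the static parts even if no JS ever runs.
function renderStaticShell({ title, heading, description, appScript }) {
  return [
    "<!DOCTYPE html>",
    "<html>",
    "<head>",
    `  <title>${escapeHtml(title)}</title>`,
    `  <meta name="description" content="${escapeHtml(description)}">`,
    "</head>",
    "<body>",
    `  <h1>${escapeHtml(heading)}</h1>`,
    '  <div id="app"></div>',
    `  <script src="${escapeHtml(appScript)}"></script>`,
    "</body>",
    "</html>",
  ].join("\n");
}

// Example values (made up for illustration).
const shellHtml = renderStaticShell({
  title: "Blue Running Shoes | Example Store",
  heading: "Blue Running Shoes",
  description: "Lightweight running shoes in blue.",
  appScript: "/js/app.js",
});
console.log(shellHtml);
```

The dynamic parts of the application still load into the `app` container, while the elements that matter most for SEO stay in the page source.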

Also, refrain from using JS onclick events to interlink pages on your site.

Most of my advice up to this point relates to load events: scripts that trigger while a page is loading in the browser.

But users can generate events on a page as well. The most common is an onclick event, such as a dynamic link triggered by JS. And although it might add dynamics to your website, you should avoid using it for interlinking pages on your site.

That's because, although Google will find those links, it won’t associate them with your site’s navigation and thus won’t build a proper picture of your site’s structure and content hierarchy.
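To make the difference concrete, the sketch below contrasts a regular link with a few onclick-driven variants. `isCrawlableLink` is a hypothetical check, `goTo` is a made-up navigation function, and the rule it applies (an `<a>` element with a real URL in its `href`) is a simplification of what counts as a standard link.

```javascript
// Hypothetical check: treats a tag as a standard link only if it is an
// <a> element whose href points to a real URL.
function isCrawlableLink(tagHtml) {
  const anchor = /^<a\b([^>]*)>/i.exec(tagHtml.trim());
  if (!anchor) return false; // not an <a> element at all
  const href = /\bhref\s*=\s*["']([^"']*)["']/i.exec(anchor[1]);
  if (!href) return false; // <a> without an href attribute
  const target = href[1].trim().toLowerCase();
  // Empty, "#", and javascript: targets do not point to a page.
  return target !== "" && target !== "#" && !target.startsWith("javascript:");
}

// Made-up examples; `goTo` stands in for any JS navigation function.
const examples = [
  '<a href="/products/shoes">Shoes</a>',
  '<span onclick="goTo(\'/products/shoes\')">Shoes</span>',
  '<a onclick="goTo(\'/products/shoes\')">Shoes</a>',
  '<a href="javascript:goTo(\'/products/shoes\')">Shoes</a>',
];

const results = examples.map(isCrawlableLink);
console.log(results);
```

Only the first example passes. If you still need a click handler for tracking or transitions, attach it to a regular link with a real `href` rather than replacing the link.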

Closing Thoughts

This post serves as an introduction to JavaScript for SEO. After reading it, you should have a good idea of why you should add the programming language to your skill set.

And in my upcoming article, I’ll dive deeper into SPAs and show you specifically how to optimize them for SEO.
