Site audits are an essential part of SEO. Regularly crawling and checking your site to ensure that it is accessible, indexable, and has all SEO elements implemented correctly can go a long way in improving the user experience and rankings.

Yet, when it comes to enterprise-level websites, the crawling process presents a unique set of challenges.

The aim is to run crawls that finish within a reasonable amount of time without negatively impacting the performance of the site.

In this blog, we'll provide tips on how to turn this goal into a reality.

Recommended Reading: The Best SEO Audit Checklist Template to Boost Search Visibility and Rankings

Table of Contents:

The Challenges of Crawling Enterprise Sites

Enterprise sites are constantly being updated. To keep up with these never-ending changes and identify any issues that come up, you need to audit your site regularly.

But, as previously stated, it can be difficult to run crawls on enterprise sites due to their massive number of pages and complex site architecture. Time and resource limitations, stale crawl results (i.e. if a crawl takes a long time to run, things could have changed by the time it’s finished), and other challenges can make running crawls a real headache.

However, these challenges should not deter you from crawling your site regularly. They are simply obstacles that you can learn about and optimize.

Reasons Why It's Important to Crawl Large Websites

There are many reasons why you need to crawl an enterprise site regularly. These include:

- Uncovering Technical Issues: Ensure that all technical SEO elements are implemented correctly. Having a crawler that not only crawls your site but also provides you with a list of both on- and off-page issues is critical.

- Search Engine Perspective: Utilizing crawlers that crawl a website like Googlebot would allows you to understand how search engines view your site, helping you to make proactive adjustments.

- Pre- and Post-Migration Checks: Running crawls pre- and post-migration is a great way to ensure that the website changes do not affect search engine visibility.

- Ensuring Content Relevancy: Auditing particular elements of your site, such as images, links, video, etc. helps ensure the information on the site is relevant.

- Historical Snapshots: Running site audits regularly provides snapshots of the site which you can reference over time to identify trends or report on long-term success.

- Global Optimization: For international sites, ensuring the correct language and regional version of your site is presented to users worldwide via hreflang tags is crucial.

- Canonicalization: Regular canonical audits help confirm that search engines and users can access and index your site's preferred pages.

Recommended Reading: SEO Crawlability Issues and How to Find Them

What to Consider When Crawling Enterprise Sites

To ensure the crawl doesn’t interfere with your site’s operations and pulls all the relevant information you need, there are certain questions you need to ask yourself before you run your crawl.

#1. Should I run a JavaScript crawl or a standard crawl?

Determining the type of crawl is one of the most important factors to consider.

When setting up a crawl, you have two types of crawl options to choose from: standard or JavaScript.

Standard crawls only crawl the source of the page. This means that only the HTML on the page is crawled. This type of crawl is quick and is typically the recommended method, especially if the links on the page are not dynamically generated.

JavaScript crawls, on the other hand, wait to render the page as they would within a browser. They are much slower than regular crawls. As such, they should be used selectively. But as more and more sites have started to use JavaScript, this type of crawl may be necessary.

Google began to crawl JavaScript in 2008, so the fact that Google can crawl these pages is nothing new. However, the problem was that Google was not able to gather a lot of information from JavaScript pages, which limited the pages’ ability to be rendered and found over HTML websites.

Now, however, Google has evolved. Sites that use JavaScript have begun to see more pages crawled and indexed which means that Google is evolving its support of this language.

If you are not sure which type of crawl to run on your site, you can disable JavaScript and try to navigate the site and its links. Do note: sometimes JavaScript is confined to content and not links, so in that case it may be okay to set up a regular crawl.

You can also check the source code and compare it to links on the rendered page, inspect the site in Chrome, or run test crawls to determine which type of crawl is best suited for your site.

#2. How fast should I crawl?

Typically, crawl speed is measured in pages crawled per second and is the number of simultaneous requests to your site.

We recommend fast crawls since a longer run time means you'll have older, potentially stale, results once it's complete. As such, you should crawl as fast as your site allows you to. Crawling using a distributed crawl (i.e. crawls using multiple nodes with separate IP's that run multiple parallel requests against your site,) could also be used.

However, it is important to know the capabilities of your site infrastructure. A crawl that runs too fast can hurt the performance of your site.

Keep in mind that it is not necessary to crawl everything on your site – more on that below.

#3. When should I crawl?

Although you can run a crawl at any time, it’s best to schedule it outside of peak hours or days.

Crawling your site during times when there is lower traffic reduces the risk that the crawl will slow down the site’s infrastructure.

If there is a crawl occurring at a high traffic time, the network team may rate limit the crawler if the site is being negatively impacted.

Recommended Reading: What Are the Best Site Audit and Crawler Tools?

#4. Does my website block or restrict external crawlers?

Many enterprise sites block all external crawlers. To ensure that your crawler has access to your site, you’ll have to remove any potential restrictions before running the crawl.

At seoClarity, we run a fully managed crawl. This means there aren't any limits on how fast or how deep we can crawl. To ensure seamless crawling, we advise whitelisting our crawler — the number one reason we’ve seen crawls fail is because the crawler is not whitelisted.

#5. What should I crawl?

It’s important to know that it is not necessary to crawl every page.

Dynamically generated pages change so often that the findings could be obsolete by the time the crawl finishes. That's why we recommend running sample crawls.

A sample crawl across different types of pages is generally enough to identify patterns and issues on the site. You can limit it by sub-folders, sub-domains, URL parameters, URL patterns, etc. You can also customize the depth and count of pages crawled. At seoClarity, we set 4 levels as our default depth.

You can also implement segmented crawling. This involves breaking down the site into small sections that represent the entire site. This offers a trade-off between completeness and the timeliness of the data.

There will, of course, be some cases where you do want to crawl the entire site, but this depends on your specific use cases.

#6. What about URL parameters?

This is a common question that comes up, especially if your site has faceted navigation (i.e. filtering and sorting results based on product attributes).

A URL parameter is information from a click that’s passed on to the URL so it knows how to behave. They are typically used to filter, organize, track, and present content, but not all parameters are useful in a crawl.

They can exponentially increase crawl size, so they should be optimized when setting up a crawl. For example, it is recommended to remove parameters that lead to duplicate crawling.

While you can remove all URL parameters when you crawl, this is not recommended because there are some parameters you may care about.

You may have the parameters you want to crawl or ignore loaded into your Search Console. If this information is set up, you can send it to us and we can set it up for our crawl too.

How seoClarity Makes it Easy to Crawl Large Websites

As you’ve seen, a lot can go into setting up a crawl. You want to tailor it so that you’re getting the information that you need — information that will be impactful for your SEO efforts.

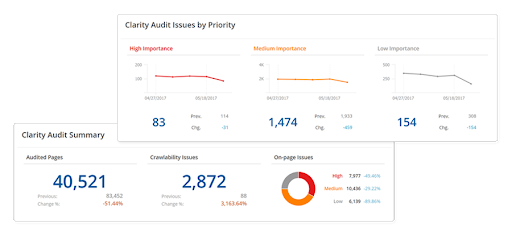

Fortunately at seoClarity, we offer our site audit tool, Clarity Audits, which is a fully managed crawl.

We work with clients and support them in setting up the crawl based on their use case to get the end results they want.

Clarity Audits runs each crawled page through more than 100 technical health checks and there are no artificial limits placed on the crawl.

You have full control over every aspect of the crawl settings, including what you’re crawling, the type of crawl (i.e. standard or JavaScript), the depth of the crawl, and the crawl speed. We help you optimize and audit your site, thus helping with the overall usability of the site.

Our Client Success Managers also ensure you have all of your SEO needs accounted for, so when you set up a crawl, any potential issues or roadblocks are identified and handled.

Want to perform a full technical site audit but are unsure where to begin?

Use our free site audit checklist to guide you through each step of the process.

Conclusion

It’s important to tailor a crawl to your specific situation so that you get the data that you’re looking for, from the pages that you care about.

Most enterprise sites are, after all, so large that it only makes sense to crawl what is relevant to you.

Staying proactive with your crawl setup allows you to gain important insights while ensuring you save time and resources along the way.

At seoClarity, we make it easy to change crawl settings to align the crawl with your unique use case.

<<Editor's Note: This piece was originally published in February 2020 and has since been updated.>>

Comments

Currently, there are no comments. Be the first to post one!