For years, SEO was a game of visibility. We built webpages, optimized for Google, and trusted that search engines would direct users to our site.

AI search has fundamentally upended that.

Instead of just referring people to your website, AI search engines now act as synthesizers, summarizing data from multiple sources they consider "authoritative."

The result? Your pricing, features, and even your customer service numbers can be overridden by a potentially inaccurate consensus of third-party websites.

Our guide breaks down the core challenges of AI misrepresentation and provides a tactical roadmap to help you reclaim your brand narrative and secure your status as the definitive source of truth.

If you prefer to watch our full discussion with the CEO of BOL Agency on these shifts, you can check out our on-demand webinar.


The Meteoric Rise of AI Search

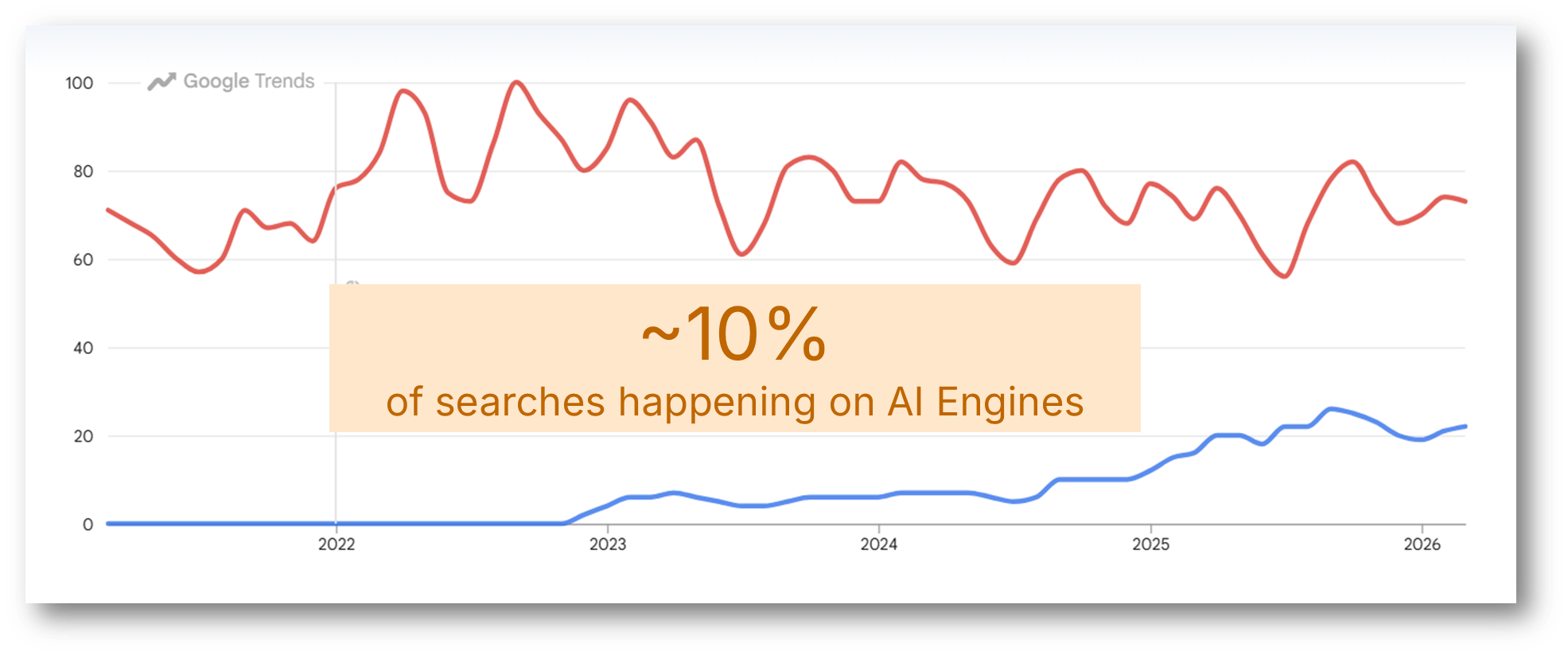

While Google’s traffic patterns have remained relatively stable, AI search is experiencing a meteoric rise. To understand the scale of this shift, we only need to look at the data:

- 10% of All Searches: Our research indicates that approximately 10% of all searches now happen on AI engines rather than traditional search engines.

- The Growth Gap: While traditional search traffic remains relatively flat, the trend line for AI search since the end of 2023 shows a vertical climb that traditional search can’t match.

- The Mainstream Shift: From ChatGPT to Perplexity and Gemini, generative AI responses are becoming ubiquitous. This isn't just a niche tool for tech enthusiasts; it is the new front line of consumer discovery.

Google Trends chart showing traditional search traffic (red) compared to AI search traffic (blue).

This growth is exciting, but it brings a significant "blind spot" for marketers: accuracy.

The Brand Inaccuracy Problem In AI Search

The industry has spent the last year obsessed with whether a brand is mentioned or cited in AI search. While visibility matters, the real challenge is narrative control.

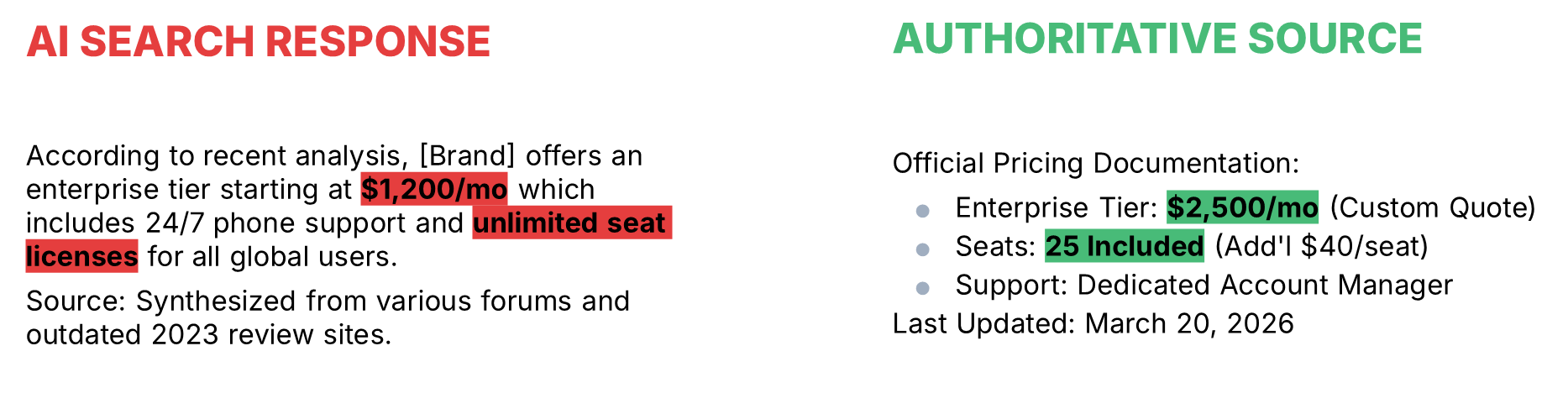

When an AI search engine summarizes your brand, it pulls from a wide variety of sources across the web. If third-party sites contain stale or incorrect data, the AI answer will confidently present that misinformation as fact.

Over the past 8 months, we have run checks on thousands of branded prompts, and our research shows that 40% of AI responses to brand-specific questions contain at least one inaccuracy.

Real-World Examples of AI Hallucinations that Cost Businesses

- Retail Hours: An AI Overview for a local Home Depot claimed the store closed at 9:00 PM. In reality, the store’s own site confirmed it was open until 10:00 PM. That lost hour represents a significant upper-funnel impact: customers stayed home based on a false summary.

- B2B Capabilities: A search asking whether the cybersecurity company Cynet supports Linux returned an AI response stating that it does not, even though the response cited a Cynet blog post explicitly stating that it does.

- Product Roadmaps: Microsoft’s Copilot recently claimed "Gemini 3.5" had been released. At the time, Gemini 3.1 was the latest model. The AI answer simply invented a version that didn't exist.

How Arc AI Accuracy Helps Solve the Brand Misrepresentation Challenge in AI Search

To help enterprises combat the rise of AI hallucinations, we built Arc AI Accuracy, which identifies brand misrepresentations across all major AI search engines, including ChatGPT and Perplexity.

By automatically checking generative responses against your authoritative documentation, it pinpoints exactly where and why the AI answer got your brand story wrong.

This gives teams a scalable, data-driven way to quantify reputation risk and correct the narrative before it impacts the bottom line.
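To illustrate the general idea of checking AI answers against authoritative documentation (this is a generic sketch, not how Arc AI Accuracy is implemented), the snippet below compares hypothetical AI answers to a hypothetical fact sheet and reports an inaccuracy rate. The fact keys, answers, and exact-match comparison are all assumptions for illustration; a real checker would have to cope with paraphrased answers.

```python
from dataclasses import dataclass

# Hypothetical fact sheet standing in for your authoritative documentation.
# Keys and values are illustrative, not real brand data.
FACT_SHEET = {
    "closing_time": "10:00 PM",
    "supports_linux": "yes",
}


@dataclass
class AuditResult:
    prompt: str
    fact_key: str
    ai_answer: str
    accurate: bool


def audit(responses, facts):
    """Compare each (prompt, fact_key, ai_answer) tuple to the fact sheet.

    Exact, case-insensitive string matching is a deliberate simplification.
    """
    results = []
    for prompt, fact_key, ai_answer in responses:
        expected = facts[fact_key]
        accurate = ai_answer.strip().lower() == expected.strip().lower()
        results.append(AuditResult(prompt, fact_key, ai_answer, accurate))
    return results


def inaccuracy_rate(results):
    """Share of audited responses that contradict the fact sheet."""
    if not results:
        return 0.0
    return sum(not r.accurate for r in results) / len(results)


if __name__ == "__main__":
    # Made-up sample answers, not real audit data.
    sample = [
        ("When does the store close tonight?", "closing_time", "9:00 PM"),
        ("Does the product support Linux?", "supports_linux", "no"),
        ("Does the product support Linux?", "supports_linux", "Yes"),
        ("What time does the store close?", "closing_time", "10:00 PM"),
    ]
    results = audit(sample, FACT_SHEET)
    for r in results:
        if not r.accurate:
            print(f"MISMATCH [{r.fact_key}] {r.prompt!r}: AI said {r.ai_answer!r}, "
                  f"docs say {FACT_SHEET[r.fact_key]!r}")
    print(f"Inaccuracy rate: {inaccuracy_rate(results):.0%}")
```

Keeping the fact sheet as a single structured source makes every mismatch traceable to one documented value, which is what lets a team explain *why* an answer is wrong, not just *that* it is.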

Why AI Search Engines Get Your Brand Information Wrong

Large Language Models (LLMs) tend to behave remarkably like a 12-year-old who hasn’t studied for a test but refuses to admit it. Here’s why:

- They always have an answer: LLMs almost never say "I don't know." They are prediction engines, not fact engines. They keep talking even when they’re totally off-base.

- They’re scary confident: They deliver these answers with such authority that it’s easy to believe them. They look and sound right, even when they’re making things up.

The Main Drivers of AI Search Inaccuracy

So, why do AI search engines get brand details wrong so frequently? It usually comes down to these two things:

- Data vs. Facts: LLMs are driven by the data they retrieve and the datasets they were trained on. They are prediction engines based on math; they don't "know" facts, they predict the next likely word.

- The Authority Vacuum: Nature abhors a vacuum, and so do LLMs. If you don't provide a clear answer on your site (e.g., hiding pricing), AI will fill that vacuum with whatever it finds on Reddit, Wikipedia, or competitor sites.
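A drastically simplified illustration of both points: the toy "model" below predicts the next word purely from word-pair counts in a tiny made-up corpus. Real LLMs are neural networks over tokens, not bigram tables, so treat this only as an analogy. It shows two things: the more frequently repeated (stale) value wins, and there is no "I don't know" path, because an unseen word simply falls back to the most common word overall.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count which word follows which across a tiny training corpus."""
    follows = defaultdict(Counter)
    overall = Counter()
    for sentence in sentences:
        words = sentence.lower().split()
        overall.update(words)
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows, overall


def predict_next(word, follows, overall):
    """Return the most frequent next word.

    There is no "I don't know" branch: a word never seen in training
    falls back to the most common word in the whole corpus.
    """
    candidates = follows.get(word.lower())
    if candidates:
        return candidates.most_common(1)[0][0]
    return overall.most_common(1)[0][0]


if __name__ == "__main__":
    # Hypothetical corpus: two stale pages outnumber the one correct page.
    corpus = [
        "the store closes at 9",
        "the store closes at 9",
        "the store closes at 10",
    ]
    follows, overall = train_bigrams(corpus)
    print(predict_next("at", follows, overall))     # prints 9 (frequency beats accuracy)
    print(predict_next("linux", follows, overall))  # never seen, but still answers
```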

The question then becomes, what do we do about this?

How to Secure Brand Authority and Fight Inaccuracy in AI Search

To fight back, enterprises must move beyond traditional SEO and adopt a GEO (Generative Engine Optimization) motion. This requires a shift in how we architect and protect our digital assets.

The 5 Pillars of an Effective GEO Strategy

At a high level, the five most important elements to consider when developing a successful GEO strategy include:

- Technical Readiness: Understanding the math of AI, including vector databases (which store content as numerical embeddings, so relevance is measured as the distance between concepts) and query fan-out (where the AI rewrites a single user prompt into a series of related sub-queries).

- Content Utility: Providing compelling, high-density content that is indexable by AI search bots and engaging for humans.

- Go-to-Market Alignment: Using prompt data to understand the buyer's mindset and inform your messaging architecture.

- Measurement: Moving away from simple click-through rates and focusing on share of voice and citation accuracy within AI engines.

- Systems Design: Creating new internal processes to own the brand narrative across the entire "agentic" web.
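To make "the distance between concepts" from the Technical Readiness pillar more concrete: retrieval systems commonly embed text as vectors and rank passages by a similarity measure such as cosine similarity. The sketch below uses made-up three-dimensional vectors and hypothetical page titles (real embedding models output hundreds or thousands of dimensions, and production vector databases use approximate nearest-neighbor indexes rather than scoring every passage).

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical 3-dimensional "embeddings"; the numbers are invented.
PASSAGES = {
    "Pricing page: plans and monthly costs": [0.9, 0.1, 0.0],
    "Blog: our company culture": [0.1, 0.9, 0.2],
    "Docs: Linux agent installation": [0.0, 0.2, 0.95],
}


def rank_passages(query_vector, passages):
    """Return passage titles ordered from closest to farthest from the query."""
    return sorted(
        passages,
        key=lambda title: cosine_similarity(query_vector, passages[title]),
        reverse=True,
    )


if __name__ == "__main__":
    query = [0.85, 0.05, 0.1]  # pretend embedding of "how much does it cost?"
    for title in rank_passages(query, PASSAGES):
        print(f"{cosine_similarity(query, PASSAGES[title]):.2f}  {title}")
```

The practical takeaway: if no page of yours sits close to a buyer's question in this space, the closest passage may well be someone else's.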

Tactical Roadmap: Executing a GEO Strategy to Reduce Brand Misrepresentation

If you are seeing your brand misrepresented, follow these six stages to regain control:

#1: Discovery & Persona Prompting

Use persona-based prompting to see what AI search says about you to different types of buyers. Audit where you are being cited and where you are missing relative to your competitors.
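One simple way to organize persona-based prompting is to cross every buyer persona with every question you care about, so no buyer type goes untested. The personas, questions, and brand name below are hypothetical placeholders; the sketch stops at building the prompt list, and sending the prompts to each AI engine and recording citations is left out.

```python
from itertools import product

# Hypothetical buyer personas and brand questions; replace with your own.
PERSONAS = [
    "a CISO at a 5,000-person bank",
    "an IT manager at a 50-person startup",
]
QUESTIONS = [
    "Which endpoint security vendors support Linux?",
    "How does {brand} compare to its competitors on pricing?",
]


def build_persona_prompts(brand, personas, questions):
    """Cross every persona with every question so each buyer type is tested."""
    return [
        f"I am {persona}. {question.format(brand=brand)}"
        for persona, question in product(personas, questions)
    ]


if __name__ == "__main__":
    for prompt in build_persona_prompts("ExampleCo", PERSONAS, QUESTIONS):
        print(prompt)
```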

#2: Content Optimization

Identify "low-hanging fruit." Audit existing content for stale data and ensure your technical foundation (like robots.txt) allows AI crawlers to see your "truth" easily.
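A quick way to sanity-check the robots.txt part of this step is Python's standard `urllib.robotparser`, which answers "may this user agent fetch this URL?" The robots.txt content and page URLs below are hypothetical. `GPTBot` (OpenAI) and `PerplexityBot` (Perplexity) are crawler names those vendors have published, but crawler names change, so verify them against each vendor's current documentation. The standard-library parser follows the original prefix-matching rules and may not interpret every vendor-specific extension the way a given crawler does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: named AI crawlers allowed, everyone else
# blocked from /pricing.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: *
Disallow: /pricing
"""

# Hypothetical pages that carry your "truth".
TRUTH_PAGES = [
    "https://www.example.com/pricing",
    "https://www.example.com/docs/linux-support",
]
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "SomeUnlistedBot"]


def crawl_report(robots_txt, agents, urls):
    """Return {agent: {url: allowed?}} for each crawler and page."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: {url: parser.can_fetch(agent, url) for url in urls} for agent in agents}


if __name__ == "__main__":
    for agent, results in crawl_report(ROBOTS_TXT, AI_CRAWLERS, TRUTH_PAGES).items():
        blocked = [url for url, ok in results.items() if not ok]
        print(agent, "blocked from:", blocked or "nothing")
```

In this example, any AI crawler not named explicitly falls under the `*` group and cannot read the pricing page, which is exactly the kind of accidental "authority vacuum" this step is meant to catch.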

#3: Gap Analysis

Where is the AI making things up? This usually indicates a content gap. Create the missing documentation so the LLM has a source to pull from.
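A minimal way to separate true content gaps from cases where the AI ignores a page you already have: cross-reference audit results with the topics your site documents. All topics and results below are hypothetical. An inaccurate topic with no first-party page points to missing documentation; an inaccurate topic you *do* document (as in the Cynet example) points to a visibility or clarity problem instead.

```python
# Hypothetical audit output: topic -> was the AI answer accurate?
AUDIT = {
    "pricing": False,
    "linux_support": False,
    "store_hours": True,
    "integrations": False,
}

# Hypothetical set of topics your own site documents clearly.
DOCUMENTED_TOPICS = {"linux_support", "store_hours"}


def find_content_gaps(audit, documented):
    """Inaccurate topics with no first-party page: likely content gaps."""
    return sorted(
        topic for topic, accurate in audit.items()
        if not accurate and topic not in documented
    )


def find_ignored_sources(audit, documented):
    """Inaccurate topics you do document: the AI is missing or overriding your page."""
    return sorted(
        topic for topic, accurate in audit.items()
        if not accurate and topic in documented
    )


if __name__ == "__main__":
    print("Create documentation for:", find_content_gaps(AUDIT, DOCUMENTED_TOPICS))
    print("Strengthen existing pages for:", find_ignored_sources(AUDIT, DOCUMENTED_TOPICS))
```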

#4: Data-Driven Analysis

Monitor your "prompt landscape." Track which keywords you "own" in AI responses and where competitors are successfully spreading disinformation.
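Share of voice has several definitions; the sketch below assumes one simple version, the fraction of collected AI responses for a keyword that mention each brand, and labels the brand with the highest share as the keyword's "owner." Brand names and responses are hypothetical, and naive substring matching will miss misspellings and count unrelated uses of a brand name.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Fraction of AI responses that mention each brand.

    Case-insensitive substring matching; one response can mention
    several brands, so shares need not sum to 1.
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


def keyword_owners(responses_by_keyword, brands):
    """For each tracked keyword, the brand with the highest share of voice."""
    owners = {}
    for keyword, responses in responses_by_keyword.items():
        shares = share_of_voice(responses, brands)
        owners[keyword] = max(shares, key=shares.get)
    return owners


if __name__ == "__main__":
    BRANDS = ["ExampleCo", "RivalSoft"]  # hypothetical brands
    collected = {  # hypothetical AI answers, grouped by tracked keyword
        "linux endpoint security": [
            "ExampleCo and RivalSoft both offer Linux agents.",
            "RivalSoft is a popular choice for Linux servers.",
            "RivalSoft leads this category.",
        ],
        "endpoint security for banks": [
            "For banks, ExampleCo is frequently recommended.",
            "ExampleCo and others compete here.",
        ],
    }
    for keyword, responses in collected.items():
        print(keyword, share_of_voice(responses, BRANDS))
    print(keyword_owners(collected, BRANDS))
```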

#5: Content Creation & Expansion

Double down on your wins. If you are cited for a specific feature, build out more exhaustive data around that topic to solidify your position as the authoritative source.

#6: Digital PR & Amplification

AI search prioritizes "consensus." Ensure your narrative lives on the third-party authoritative sources (Reddit, industry journals, etc.) that LLMs use to validate facts.
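Why amplification matters can be illustrated with a deliberately naive consensus model: one source, one vote, no authority weighting. AI engines do not publicly document their answer selection as a simple vote, so this is only an analogy, and the source names are hypothetical. It shows how two stale listings can outvote your own site, and how getting the correct value onto more third-party pages flips the outcome.

```python
from collections import Counter


def consensus_answer(claims):
    """Return the most common claimed value and its share of all claims.

    Deliberately naive: every source gets one vote, regardless of authority.
    """
    counts = Counter(claims.values())
    value, votes = counts.most_common(1)[0]
    return value, votes / len(claims)


if __name__ == "__main__":
    # Hypothetical sources reporting a store's closing time.
    claims = {
        "brand-site.example": "10:00 PM",
        "old-directory.example": "9:00 PM",
        "stale-review-site.example": "9:00 PM",
    }
    print(consensus_answer(claims))  # the stale value wins 2 votes to 1

    # Amplification: the correct value now appears on more third-party pages.
    claims["industry-journal.example"] = "10:00 PM"
    claims["reddit-thread.example"] = "10:00 PM"
    print(consensus_answer(claims))  # the correct value wins 3 votes to 2
```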

Conclusion: Fueling the Answer

We are moving into a world where having the information "out there" is better than keeping it close to the vest.

If you don't provide the answer, AI search engines will find a "reasonable" (and likely wrong) answer elsewhere.

By owning your story and providing the data search engines need, you can stop being a victim of AI hallucinations and start driving growth by being the most trusted answer on the web.

Ready to see where AI search is getting your brand wrong & correct the narrative? Demo Arc AI Accuracy Now!
